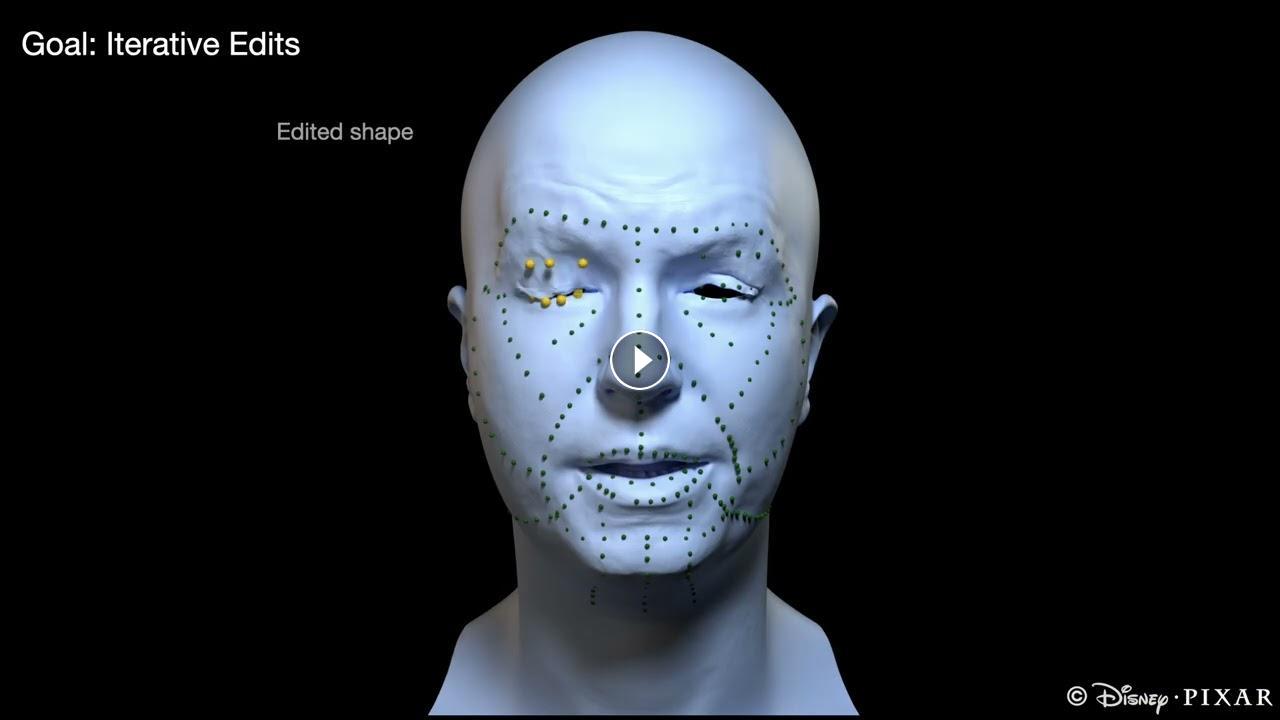

Facial animation is one of the most labor-intensive aspects of animation and VFX: traditional rigging consumes weeks of expert time and forces animators to spend countless hours manipulating hundreds of controls to achieve varied expressions. This technical complexity creates a barrier between artistic vision and execution, limiting creative exploration and iteration. In this paper, we introduce CANRig, a fully automated neural facial rigging approach that simplifies creating and editing facial poses by exploiting global correlations learned from data. Unlike existing neural face models that either sacrifice local control or demand extensive manual region setup, our method introduces continuous local control through a novel conditioning mechanism that operates on a variable region. By modeling deformation as cross-attention between control handles and mesh vertices, modulated by a user-defined region, we enable seamless transitions from precise local adjustments to broad global changes. We further extend our method with a shape-preserving workflow that enables iterative edits, guaranteeing that earlier edits remain untouched even as controls are reconfigured. Our method delivers the best of both worlds: the automation and naturalness of neural methods with the granular control that professional animators demand. We demonstrate its effectiveness across multiple applications in both animation and high-end visual effects pipelines.
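To make the idea of region-modulated cross-attention concrete, here is a minimal NumPy sketch. It is an illustration under stated assumptions, not the CANRig architecture: the function name `deform`, the random projections `w_q` and `w_k` (stand-ins for learned weights), the scalar `radius`, and the Gaussian falloff are all hypothetical choices made for this example. Vertices act as queries and control handles as keys; the attention weights decide how each vertex blends the handle offsets, and the falloff limits that blend to a region whose size the user sets.

```python
import numpy as np

def softmax(x, axis=-1):
    """Numerically stable softmax along the given axis."""
    shifted = x - x.max(axis=axis, keepdims=True)
    e = np.exp(shifted)
    return e / e.sum(axis=axis, keepdims=True)

def deform(vertices, handles, handle_offsets, radius, w_q, w_k):
    """Move mesh vertices by attending to control handles.

    vertices:       (V, 3) rest positions of the mesh vertices
    handles:        (H, 3) rest positions of the control handles
    handle_offsets: (H, 3) user-specified handle displacements
    radius:         positive scalar, size of the editable region (assumed form)
    w_q, w_k:       (3, d) projections, placeholders for learned weights
    """
    q = vertices @ w_q                        # (V, d) vertex queries
    k = handles @ w_k                         # (H, d) handle keys
    scores = (q @ k.T) / np.sqrt(q.shape[1])  # (V, H) scaled dot products
    attn = softmax(scores, axis=1)            # each row sums to 1 over handles

    # Assumed region modulation: a Gaussian falloff on vertex-handle distance.
    # A small radius keeps the edit local; a large radius approaches a global edit.
    dist = np.linalg.norm(vertices[:, None, :] - handles[None, :, :], axis=-1)
    falloff = np.exp(-(dist / radius) ** 2)   # (V, H), values in (0, 1]

    displacement = (attn * falloff) @ handle_offsets  # (V, 3)
    return vertices + displacement, attn

rng = np.random.default_rng(0)
w_q = rng.normal(size=(3, 8))
w_k = rng.normal(size=(3, 8))

vertices = np.array([[0.0, 0.0, 0.0], [10.0, 0.0, 0.0]])
handles = np.array([[0.0, 0.0, 0.0]])
handle_offsets = np.array([[0.0, 1.0, 0.0]])

# Same handle edit, evaluated with a tight region and with a broad one.
local, attn = deform(vertices, handles, handle_offsets, 1.0, w_q, w_k)
broad, _ = deform(vertices, handles, handle_offsets, 100.0, w_q, w_k)
print("local edit:", local)
print("broad edit:", broad)
```

In this toy setup the distant vertex stays essentially fixed when the radius is small and follows the handle when the radius is large, which is the local-to-global transition the abstract describes. A trained model would learn the projections from data rather than sample them at random.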

Publication Link: https://studios.disneyresearch.com/2026/04/04/canrig-cross-attention-neural-face-rigging-with-variable-local-control/

Category: CG VFX & Misc